CUDA dim3 grid

5/5/2023

CUDA provides a handy type, `dim3`, to keep track of these dimensions. You can declare dimensions like this: `dim3 myDimensions(1, 2, 3)`, which sets the extent of each dimension (x = 1, y = 2, z = 3). Both blocks and grids use this type. On early GPUs (compute capability below 2.0), grids were limited to two dimensions, so a `dim3` used as a grid dimension had to leave out the last argument or set it to one. Newer GPUs also support three-dimensional grids.

To sum up, it does not matter whether you use a `dim3` structure or plain integers in the launch configuration. Unspecified components default to 1 for blocks and grids alike. So in both cases, `dim3 blockDims(512)` and an integer launch such as `myKernel<<<N, 512>>>(...)` (with N blocks), you always have access to `threadIdx.y` and `threadIdx.z`. Because the thread IDs start at zero, you can calculate a memory position in row-major order using the y dimension as well. Compute `int x = blockIdx.x * blockDim.x + threadIdx.x;` and `int y = blockIdx.y * blockDim.y + threadIdx.y;`, then use `y * width + x` as the element index, where `width` is the length of one row. In a 1D launch this simply reduces to `x`, because `blockIdx.y` and `threadIdx.y` will be zero.

Just be clear about where the configuration of the threads has been defined. The 1D, 2D or 3D access pattern depends on how you interpret your data and on how you access it with 1D, 2D or 3D blocks of threads.

Unified memory is used on NVIDIA embedded platforms, such as the NVIDIA Drive series and the NVIDIA Jetson series.
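The row-major indexing above can be sketched as a small kernel. This is a minimal example, assuming a 2D array of `width` by `height` ints stored as one contiguous buffer; the names `fillRowMajor` and `runAndCheck` are hypothetical. The same kernel is launched once with a 2D `dim3` configuration and once with plain integers, and in both cases each element should end up holding its own index.

```cuda
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

// Each thread writes its own row-major index into out[gid].
// width/height describe a 2D array stored as one contiguous 1D buffer.
__global__ void fillRowMajor(int *out, int width, int height)
{
    int x = blockIdx.x * blockDim.x + threadIdx.x;  // column
    int y = blockIdx.y * blockDim.y + threadIdx.y;  // row (always 0 in a 1D launch)
    if (x < width && y < height) {
        int gid = y * width + x;                    // row-major position
        out[gid] = gid;
    }
}

// Launches the kernel with the given configuration and returns true
// if every element holds its own index afterwards.
bool runAndCheck(dim3 grid, dim3 block, int width, int height)
{
    const int n = width * height;
    int *d_out = nullptr;
    if (cudaMalloc(&d_out, n * sizeof(int)) != cudaSuccess) return false;
    cudaMemset(d_out, 0xFF, n * sizeof(int));       // every int becomes -1

    fillRowMajor<<<grid, block>>>(d_out, width, height);
    if (cudaGetLastError() != cudaSuccess) { cudaFree(d_out); return false; }

    std::vector<int> h(n);
    cudaError_t err = cudaMemcpy(h.data(), d_out, n * sizeof(int),
                                 cudaMemcpyDeviceToHost);  // waits for the kernel
    cudaFree(d_out);
    if (err != cudaSuccess) return false;
    for (int i = 0; i < n; ++i)
        if (h[i] != i) return false;
    return true;
}

int main()
{
    // 2D launch: 16x16 blocks, enough blocks to cover the array (ceiling division).
    const int width = 1000, height = 300;
    dim3 block(16, 16);
    dim3 grid((width + block.x - 1) / block.x, (height + block.y - 1) / block.y);
    bool ok2d = runAndCheck(grid, block, width, height);

    // 1D launch with plain integers (implicitly converted to dim3):
    // blockIdx.y and threadIdx.y are 0, so gid reduces to x.
    const int n = 5000;
    bool ok1d = runAndCheck((n + 511) / 512, 512, n, 1);

    std::printf("2D launch: %s\n1D launch: %s\n",
                ok2d ? "ok" : "FAILED", ok1d ? "ok" : "FAILED");
    return (ok2d && ok1d) ? 0 : 1;
}
```

The bounds check inside the kernel matters because the rounded-up grid launches a few more threads than there are elements.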

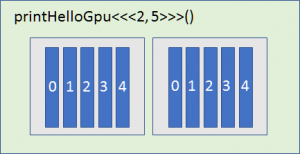

`dim3` is an integer vector type based on `uint3` that is used to specify dimensions. When defining a variable of type `dim3`, any component left unspecified is initialized to 1.

The way you arrange the data in memory is independent of how you configure the threads of your kernel. The memory is always a 1D contiguous space of bytes. However, the access pattern depends on how you interpret your data and on how you access it with 1D, 2D and 3D blocks of threads.

A kernel is executed as a grid of blocks of threads (Figure 2). Each CUDA block is executed by one streaming multiprocessor (SM) and cannot be migrated to other SMs in the GPU (except during preemption, debugging, or CUDA dynamic parallelism).
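A short host-side sketch of the default-to-1 rule, assuming only the `dim3` type from the CUDA runtime headers; the helper `totalThreads` is hypothetical and simply multiplies out the grid and block extents to count the threads a launch would create.

```cuda
#include <cstdio>
#include <cuda_runtime.h>  // brings in dim3 (defined in vector_types.h)

// Number of threads a <<<grid, block>>> launch creates:
// (blocks per grid) * (threads per block), over all three dimensions.
unsigned long long totalThreads(dim3 grid, dim3 block)
{
    return 1ULL * grid.x * grid.y * grid.z * block.x * block.y * block.z;
}

int main()
{
    dim3 block(512);   // y and z left out, so both are 1
    dim3 grid(8, 4);   // z left out, so it is 1
    dim3 unit;         // nothing given, so (1, 1, 1)

    std::printf("block = (%u, %u, %u)\n", block.x, block.y, block.z);
    std::printf("grid  = (%u, %u, %u)\n", grid.x, grid.y, grid.z);
    std::printf("unit  = (%u, %u, %u)\n", unit.x, unit.y, unit.z);
    std::printf("threads launched: %llu\n", totalThreads(grid, block));
    return 0;
}
```

This prints `block = (512, 1, 1)`, `grid  = (8, 4, 1)`, `unit  = (1, 1, 1)` and `threads launched: 16384`, that is 8 * 4 blocks of 512 threads each.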
